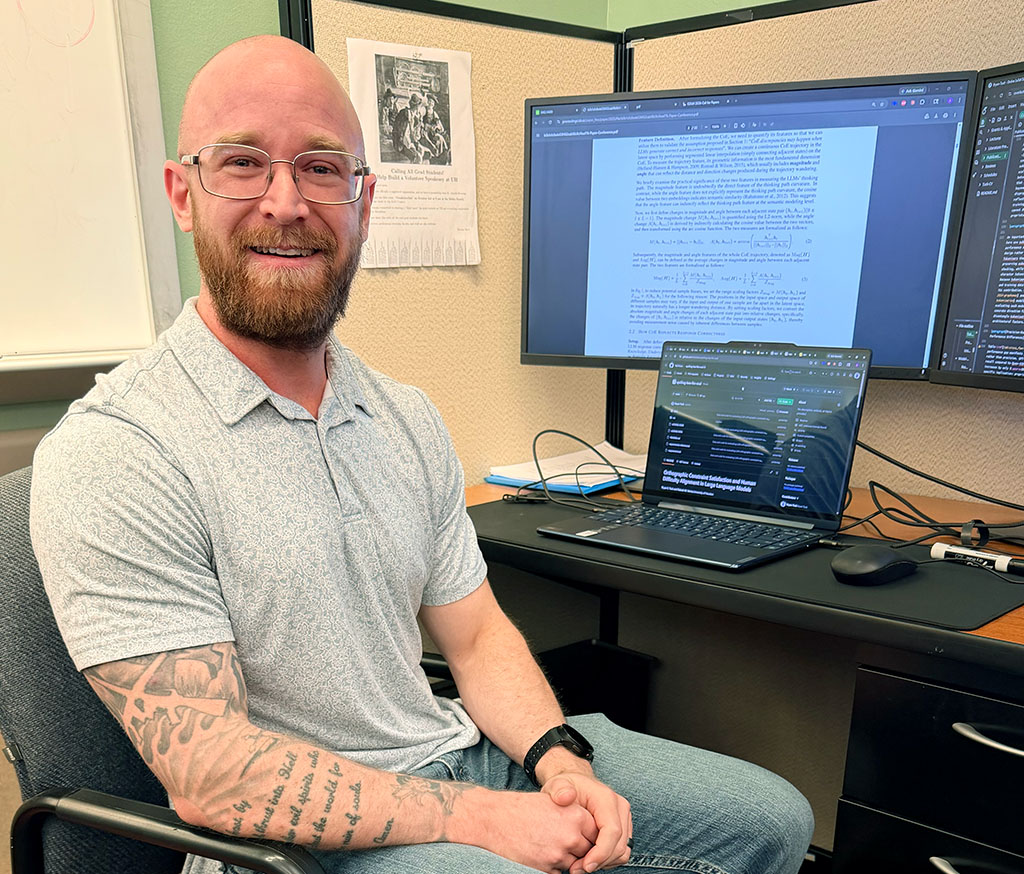

Bryan Tuck, a third-year Ph.D. student in the University of Houston’s Department of Computer Science and a researcher in the Reasoning, Data Analytics and Cybersecurity (ReDAS) lab, is gaining recognition for research that addresses one of artificial intelligence’s most pressing challenges: making AI systems more secure and reliable.

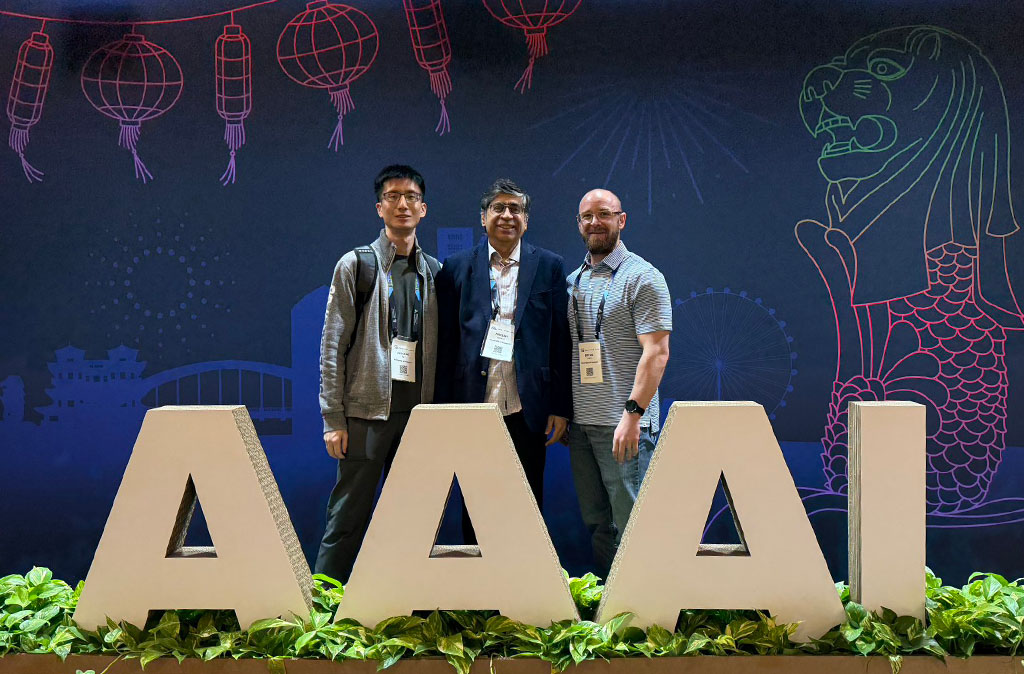

Tuck recently earned the COSC Junior Ph.D. Student Award and published a first-author paper at the highly competitive AAAI 2026 conference, one of the leading international venues for artificial intelligence research. He has also been accepted into a Department of War (formerly the Department of Defense) summer internship program, where he will continue work related to AI security and robustness.

Tuck’s research focuses on adversarial machine learning, a field that examines how small, often imperceptible changes to input data can cause AI systems to produce dramatically different results.

“These tiny changes might be invisible to humans, but they can significantly affect how an AI system interprets information,” Tuck said.

A commonly cited example involves autonomous vehicles. If a stop sign is altered with a small patch or pattern, a vehicle’s computer vision system could fail to recognize it, potentially leading to dangerous outcomes.
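To make the idea concrete, here is a minimal sketch of how a small, bounded change to every input feature can flip a classifier's decision. It is a toy linear model, not a vision system and not Tuck's work; the weights, the input, and the helper names `predict` and `perturb` are all hypothetical and chosen only for illustration.

```python
def predict(weights, bias, x):
    """Return 1 if the linear score is positive, else 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, x, epsilon, label):
    """Nudge each feature by at most epsilon in the direction that
    lowers the score for label 1 or raises it for label 0."""
    direction = -1 if label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input (assumptions, not taken from the article).
weights = [0.5, -0.25, 0.75, -0.5]
bias = 0.0
clean = [0.3, 0.2, 0.1, 0.25]
epsilon = 0.05  # largest change allowed for any single feature

adversarial = perturb(weights, clean, epsilon, label=predict(weights, bias, clean))

print("clean prediction:      ", predict(weights, bias, clean))
print("adversarial prediction:", predict(weights, bias, adversarial))
print("largest feature change:", max(abs(a - c) for a, c in zip(adversarial, clean)))
```

No feature moves by more than `epsilon`, yet the many small shifts add up in the score and push the input across the decision boundary. Real attacks on deep networks are more involved, but they rely on the same accumulation effect.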

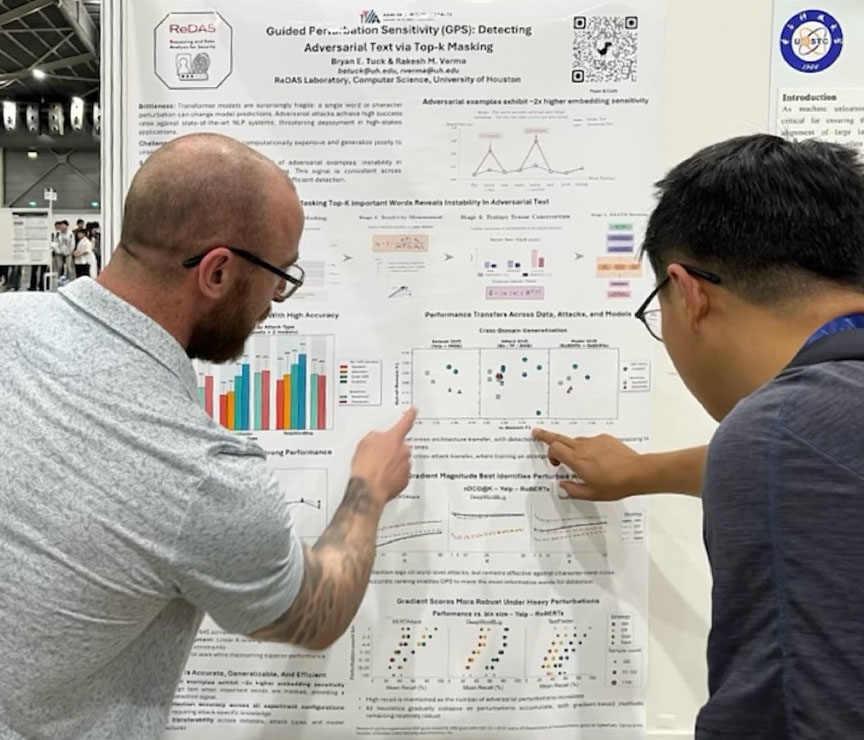

Tuck’s work explores how the internal behavior of AI models changes when they encounter these adversarial inputs. His research found that manipulated data produces instability in a model’s internal activity, and that this instability can serve as a signal for detecting malicious inputs.

“When you run a normal input through the model, the internal activity is relatively stable,” he explained. “But adversarial inputs create instability inside the model. That instability can serve as a signal that something malicious is happening.”
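The article does not describe how the instability is measured, so the following is only a rough sketch of the general idea and not the method from the paper. It assumes a hypothetical one-layer toy model, defines "instability" as how far the hidden activations move when the input is jittered slightly, and flags inputs whose score exceeds a threshold calibrated on inputs assumed to be clean. Every name, weight, and input value here is an illustrative assumption.

```python
import math
import random
import statistics

# Hypothetical hidden layer with 2 inputs and 2 units (illustrative weights).
WEIGHTS = [[1.0, 0.0], [0.0, 1.0]]


def hidden_activations(x, weights=WEIGHTS):
    """Return the tanh activations of a single hidden layer."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def instability(x, noise=0.01, trials=20, seed=0):
    """Average shift in hidden activations under small input jitter."""
    rng = random.Random(seed)  # fixed seed keeps the score reproducible
    baseline = hidden_activations(x)
    shifts = []
    for _ in range(trials):
        jittered = [xi + rng.uniform(-noise, noise) for xi in x]
        moved = hidden_activations(jittered)
        per_unit = [abs(a - b) for a, b in zip(moved, baseline)]
        shifts.append(sum(per_unit) / len(per_unit))
    return statistics.mean(shifts)


def calibrate_threshold(clean_inputs, k=3.0):
    """Set the threshold k standard deviations above the mean clean score."""
    scores = [instability(x) for x in clean_inputs]
    return statistics.mean(scores) + k * statistics.pstdev(scores)


def is_suspicious(x, threshold):
    """Flag an input whose instability score exceeds the threshold."""
    return instability(x) > threshold


# Assumption: these clean inputs sit where the toy model responds stably.
clean_inputs = [[3.0, 3.0], [-3.0, 2.5], [2.5, -3.0], [-2.8, -2.8]]
threshold = calibrate_threshold(clean_inputs)

stable_input = [3.0, 2.9]       # resembles the calibration inputs
unstable_input = [0.05, -0.05]  # sits where activations react sharply

print("stable input flagged:  ", is_suspicious(stable_input, threshold))
print("unstable input flagged:", is_suspicious(unstable_input, threshold))
```

The sketch does not model which inputs are actually adversarial. It only shows the mechanics of turning an internal stability measurement into a detection flag, and it keeps the check cheap by reusing a single hidden layer.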

Unlike many previous approaches that defend against a single type of attack, Tuck’s method is designed to generalize across different models and unseen attacks. This flexibility makes the system more practical for real-world applications, where attackers constantly adapt their techniques.

The research also emphasizes efficiency, ensuring security mechanisms do not slow down systems to the point where users abandon them.

“You have to consider usability,” Tuck said. “If a security system is so slow that users have to wait a long time for results, they’re not going to use it.”

Tuck credits much of his research perspective to his nontraditional path to academia. Before attending college, he served four years in the U.S. Army. That experience exposed him to adversarial environments, where systems are constantly tested and attacked, and it gave him a mindset that now shapes his research in cybersecurity and artificial intelligence.

His advisor, computer science professor Dr. Rakesh M. Verma, and colleagues in the ReDAS lab have also played an important role in shaping his work.

“Research can feel solitary because you’re focused on a very specific problem,” Tuck said. “But it really takes a team — your advisor, lab mates, and other faculty — to challenge ideas and help refine your work.”

The AAAI conference acceptance marks a major milestone in his doctoral journey. The conference receives thousands of submissions each year and accepts only a fraction of them, making it one of the most selective venues in the field.

For Tuck, the recognition from both the conference and the COSC Junior Ph.D. Student Award serves as validation of the work he and his collaborators have been doing.

“There’s always some level of imposter syndrome when you’re a Ph.D. student,” he said. “Receiving the award showed me that I’m on the right track.”

This summer, Tuck will participate in the Department of War internship program, where he plans to continue research on adversarial machine learning and improving the robustness of AI systems.

The broader goal of his work is to ensure AI technologies remain both powerful and safe as they become increasingly integrated into everyday life.

“These systems are incredibly capable and incredibly helpful,” he said. “But right now they are also fragile. Our goal is to make them more robust and secure.”

Looking ahead, Tuck hopes to continue working at the intersection of artificial intelligence and national security. In the long term, he envisions a career at a national laboratory or research institution where he can lead projects focused on protecting critical systems.

He also hopes to mentor future researchers along the way.

“I’d like to eventually run my own research lab and help train the next generation,” he said.

For students interested in pursuing research careers, Tuck encourages persistence and curiosity.

“Don’t be afraid to take the shot,” he said. “Research is very different from coursework — you’re not just learning knowledge, you’re creating it.”